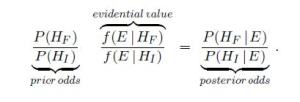

I saw some tweets last night alluding to a technique for Bayesian forensics, on the basis of which published papers are to be retracted. So far as I can tell, your paper is guilty of being fraudulent so long as the/a prior Bayesian belief in its fraudulence is higher than in its innocence. Klaassen (2015):

“An important principle in criminal court cases is ‘in dubio pro reo’, which means that in case of doubt the accused is favored. In science one might argue that the leading principle should be ‘in dubio pro scientia’, which should mean that in case of doubt a publication should be withdrawn. Within the framework of this paper this would imply that if the posterior odds in favor of hypothesis HF of fabrication equal at least 1, then the conclusion should be that HF is true.”

Now the definition of “evidential value” (supposedly, the likelihood ratio of fraud to innocence), called V, requires that it be at least 1. So it follows that any paper for which the prior for fraudulence exceeds that of innocence “should be rejected and disqualified scientifically. Keeping this in mind one wonders what a reasonable choice of the prior odds would be.” (Klaassen 2015)

Yes, one really does wonder!
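
To spell out the arithmetic, here is a minimal sketch, assuming V enters the way a likelihood ratio does in the odds form of Bayes' theorem. The label $H_I$ for the innocence hypothesis is mine, not Klaassen's; $H_F$ is the paper's HF.

```latex
\[
\underbrace{\frac{P(H_F \mid \text{data})}{P(H_I \mid \text{data})}}_{\text{posterior odds}}
\;=\; V \times
\underbrace{\frac{P(H_F)}{P(H_I)}}_{\text{prior odds}},
\qquad
V \ge 1
\;\Longrightarrow\;
\text{posterior odds} \;\ge\; \text{prior odds}.
\]
```

On this reading, once the prior odds are at least 1, the posterior odds are at least 1 whatever the data show, and the “in dubio pro scientia” rule declares HF true.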

“V ≥ 1. Consequently, within this framework there does not exist exculpatory evidence. This is reasonable since bad science cannot be compensated by very good science. It should be very good anyway.”

What? I thought the point of the computation was to determine whether there is evidence for bad science. So unless the computation is a good measure of evidence for bad science, this remark makes no sense. Yet even the best case can be regarded as bad science simply because the prior odds in favor of fraud exceed 1. And there’s no guarantee this prior odds ratio is a reflection of the evidence, especially since if it had to be evidence-based, there would be no reason for it at all. (They admit the computation cannot distinguish between QRPs and fraud, by the way.)

Since this post is not yet in shape for my regular blog, but I wanted to write down something, it’s here in my “rejected posts” site for now.

Added June 9: I realize this is being applied to the problematic case of Jens Forster, but the method should stand or fall on its own. I thought rather strong grounds for concluding manipulation were already given in the Forster case. (See Forster on my regular blog.) Since that analysis could (presumably) distinguish fraud from QRPs, it was more informative than the best this method can do. Thus, the question arises as to why this additional and much shakier method is introduced. (By the way, Forster admitted to QRPs, as normally defined.) Perhaps it’s in order to call for a retraction of other papers that did not admit of the earlier, Fisherian criticisms. It may be little more than formally dressing up the suspicion we’d have in any papers by an author who has retracted one(?) in a similar area. The danger is that it will live a life of its own as a tool to be used more generally.

Further, just because someone can treat a statistic “frequentistly” doesn’t place the analysis within any sanctioned frequentist or error statistical home. Including the priors, and even the non-exhaustive, (apparently) data-dependent hypotheses, takes it out of frequentist hypothesis testing. Additionally, this is being used as a decision-making tool to “announce untrustworthiness” or “call for retractions”, not merely to analyze warranted evidence.

Klaassen, C. A. J. (2015). Evidential value in ANOVA-regression results in scientific integrity studies. arXiv:1405.4540v2 [stat.ME].

Discussion of the Klaassen method on PubPeer: https://pubpeer.com/publications/5439C6BFF5744F6F47A2E0E9456703