Sit down with students or friends and ask them what’s wrong with this study–or just ask yourself–and it will likely leap out. Now I’ve only read the paper quickly, and know nothing of oxytocin (OT) research. That’s why this is in the “Rejected Posts” blog. Plus, I’m writing this really quickly.

You see, I noticed a tweet about how non-statistically significant results are often stored in file drawers, rarely published, and right away was prepared to applaud the authors for writing on their negative result. Now that I see the nature of the study, and the absence of any critique of the experiment itself (let alone the statistics), I am less impressed. What amazes me about so many studies is not that they fail to replicate but that the glaring flaws in the study aren’t considered! But I’m prepared to be corrected by those who do serious oxytocin research.

In a nutshell: Treateds get OT nasal spray, controls get a placebo spray; you’re told they’re looking for effects on sexual practices, when actually they’re looking for effects on the trust you bestow upon experimenters with your answers.

The instructions were as follows: “You will now perform a task on the computer. The instruction concerning this task will appear on screen but if you have any question, do not hesitate. At the end of the computer test, you will have to fill a questionnaire that is in the envelope on your desk. As we want to examine if oxytocin has an influence on sexual practices and fantasies, do not be surprised by the intimate or awkward nature of the questions. Please answer as honestly as possible. You will not be judged. Also do not be afraid of being sincere in your answers, I will not look at your questionnaire, I swear it. It will be handled by one of the guy in charge of the optical reading device who will not be able to identify you (thanks to the coding system). At the end of the experiment, I will bring him all the questionnaires. I will just ask you to put the questionnaire back in the envelope once it is completed. You may close the envelope at the end and, if you want, you may even add tape. There is a tape dispenser on your desk”. There is some examples of questions they were asked to answer: “What was your wildest sex experiment ?”, “Are you satisfied with your sex life? Could you describe it? (frequency, quality,…)” Please report on a 7-point Likert scale (1 = not at all, it disgusts me to 7 = very much, I really like) your willingness to be involved in the following sexual practices: using sex toys, doing a threesome, having sex in public, watch other people having sex, watch porn before or during a sexual intercourse,…”

Imagine you’re a subject in the study. Is there a reason to care if the researcher knows details of your sex life? The presumption is that you do care. But anyone who really cared wouldn’t reveal whatever they deemed so embarrassing. But wait, there’s another crucial element to this experiment.

We’re told: “we want to examine if oxytocin has an influence on sexual practices and fantasies”. You’ve been sprayed with either OT or placebo, and I assume you don’t know which. Suppose OT does influence willingness to engage in wild sex experiments. Being sprayed today couldn’t very well change your previous behavior. So unless they had asked you last week (without spray) and now once again with spray, they can’t be looking for changes in actual practice. But OT spray could make you more willing to say you’re more willing to engage in “the following sexual practices: using sex toys, doing a threesome, having sex in public,…etc. etc.” It could also influence feelings right now, i.e., how satisfied you feel now that you’ve been “treated”. So since the subject reasons this must be the effect they have in mind, only scores on the “willingness” and “current feelings” questions could be picking up on the OT effect. But high numbers on willingness and feelings questions don’t reflect actual behaviors–unless the OT effect extends to exaggerating about past behaviors, that is, lying about them, in which case, once again, your own actual choices and behaviors in life are not revealed by the questionnaire. Given the tendency of subjects to answer as they suppose the researcher wants, I can imagine higher numbers on such questions (than if they weren’t told they’re examining if OT has an influence on sexual practices). But since the numbers don’t, indeed, can’t reflect true effects on sexual behavior, there’s scarce reason to regard them as private information revealed only to experimenters you trust. I’ll bet almost no one uses the tape*.

There are many alternative criticisms of this study. For example, realizing they can’t be studying the influence of OT on sex practices, you already mistrust the experimenter. Share yours.

Let me be clear: I don’t say OT isn’t related to love and trust––it’s active in childbirth, nursing, and, well…whatever. It is, after all, called the ‘love hormone’. My kvetch is with the capability of this study to discern the intended effect.

I say we need to publish analyses showing what’s wrong with the assumption that a given experiment is capable of distinguishing the “effects” of the “treatment” of interest. And what about those Likert scales! These aren’t exactly genuine measurements merely because they’re quantitative.
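To make the Likert worry concrete, here is a toy sketch in Python. The scores are made up by me for illustration (they are not from the paper), and the "stretched" recoding is just one hypothetical order-preserving relabeling of the 1-to-7 scale. The point is only that the sign of a difference in group means can depend on how the ordinal labels are spaced.

```python
from statistics import mean

# Made-up 7-point Likert responses (hypothetical, NOT data from the study).
treated = [1, 1, 7, 7]
control = [4, 4, 4, 5]

# Two order-preserving codings of the same seven response categories.
equal_spacing = {k: k for k in range(1, 8)}   # the usual 1..7 labels
stretched = {k: k ** 2 for k in range(1, 8)}  # same ordering, wider gaps at the top

def treated_minus_control(coding):
    """Difference in mean score (treated - control) under a given coding."""
    t = mean(coding[s] for s in treated)
    c = mean(coding[s] for s in control)
    return t - c

diff_equal = treated_minus_control(equal_spacing)
diff_stretched = treated_minus_control(stretched)

print("equal spacing:", diff_equal)
print("stretched:    ", diff_stretched)
```

With these invented numbers the treated group scores lower on average under the usual coding and higher under the stretched one, even though no subject's answer changed. Nothing here says which coding is right; that is rather the problem.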

*It would be funny to look for a correlation between racy answers and tape.

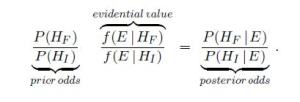

Now the definition of “evidential value” (supposedly, the likelihood ratio of fraud to innocent), called V, must be at least 1. So it follows that any paper for which the prior for fraudulence exceeds that of innocence,
